A Line in the Sand: Artificial Intelligence and Human Liberty

Matt Watkins

You have to be working for the benefit of people. It cannot be simply about accumulating wealth or accumulating power.

Part of the appeal of artificial intelligence is that it seems to stand above the messy world of human decision-making.

The criminal justice system has no shortage of that kind of decision-making. And AI tools are being put forward as solutions in everything from police departments and prisons to probation offices and courtrooms.

But how do we separate AI’s real promise from the hype? And how do we ensure the technology helps, rather than sets back, the cause of fairness and justice?

Making AI work in the service of justice is precisely the mandate given to Roy Austin, Jr., the inaugural director of the Howard Law Artificial Intelligence Initiative. Austin is a former Deputy Assistant Attorney General with the Civil Rights Division at the Department of Justice under President Obama and, until last year, the Vice President of Civil Rights at the tech giant Meta. He’s also senior advisor to the AI and Justice Consortium, of which the Center for Justice Innovation is a founding partner.

In this episode of New Thinking, Austin argues AI isn’t so much a story about technology. “It’s human beings who decide what data goes in. It’s human beings who decide the algorithms and how they’re going to work,” he explains. “And it’s human beings who are impacted the most by this.”

For more information on our work with AI in the justice system, click the link below:

AI & Justice

For a transcript of the episode, see below.

Matt WATKINS: Welcome to New Thinking from the Center for Justice Innovation. I’m Matt Watkins.

Part of the deep appeal of artificial intelligence is it seems to stand above the messy world of human decision-making.

The criminal legal system has no shortage of that kind of decision-making. And AI solutions are being put forward for everything from police departments and prisons to probation offices and courtrooms.

But how do we separate AI’s real promise from the hype? And how do we ensure the technology helps, rather than sets back, the cause of fairness and justice?

There are few people better qualified to answer those questions than today’s guest.

Amongst the many highlights on his resume, Roy Austin, Jr. is a former Deputy Assistant Attorney General with the Civil Rights Division at the Department of Justice under President Obama. Up until about a year ago, he was the Vice President of Civil Rights at the tech giant, Meta. And beginning this year, Austin is the inaugural director of the Howard Law Artificial Intelligence Initiative.

Its writ is to bend the arc of AI toward justice.

Here is my conversation with Roy Austin.

WATKINS: On paper, you really present as an institutionalist: you’ve had a number of prominent positions at the DOJ, you were a partner at a big law firm, you were the VP of Civil Rights at Meta. But you’re also someone who seems quite skeptical of institutions—and of institutional power, particularly the way that power can be wielded against the marginalized.

I’m wondering if you could square that circle for me a little bit, if you agree that there’s a circle to be squared.

Roy AUSTIN: I think it’s fair if you just look at my resume and you say, “Yeah, he worked at the Department of Justice and, yeah, he worked at Meta, and he worked at law firms. So yes, he believes in institutions.” But if you dig a little bit deeper into what I did at each of those places, I think you would notice that really my job has always been to challenge institutions.

You take my work at DOJ, and I started as a federal prosecutor prosecuting civil rights cases. I was prosecuting hate crimes and police brutality cases—not your average cases. When I was at law firms, I was building civil rights practices where I was challenging what was happening with respect to law enforcement institutions.

And then at Meta, I had an incredibly unique role—one that didn’t exist before and not sure when it’s going to exist again—but this vice president of civil rights. And I really saw the role as an opportunity to force the company to reflect on civil rights issues and how what it was building was impacting communities.

So, really, every time I’ve been in an institution, it has been in some ways to challenge what is normally thought of that institution.

WATKINS: Now with this recent gig at Howard Law with the Artificial Intelligence Initiative, the institution you’re taking on to some degree is artificial intelligence and the tech companies. I’ve seen where some people liken the kind of power that the tech companies have now, the sort of international reach of them, to empires of the nineteenth century.

Do you feel like what you’re taking on now, that sort of power, is almost qualitatively different in scope?

AUSTIN: I don’t know that it’s different in scope. I think this is an opportunity without real limitations to build something, to challenge these tech companies and what they’re claiming and what they’re saying, and potentially even to build our own. And so really, this is new to me because I’m not stepping into an institution where there are those types of guardrails and I’m building something here that I deeply believe needs to be built.

WATKINS: I guess just to push you a little bit on the nature of the challenge: certainly in this country, the way that we’ve seen that the big tech companies—or tech oligarchs, as I’ve seen you refer to them—are very much wedded to the power of the federal government, very much wedded to the financial sector of this country.

That just seems like, and I don’t want to start in a dispiriting manner, but just to have a sense of what we’re up against: if the goal is to rein in the sector somewhat, or put it at the service of people, it just seems like a difficult task.

AUSTIN: Yeah, so I wouldn’t say I’m trying to rein them in.

I’m trying to make them do what’s right. And I have very strong feelings about what’s right, and that is, you’ve got to be working for the benefit of people. It cannot be simply about accumulating wealth or accumulating power.

So, definitely not trying to rein them in, but throughout history, look at businesses who wanted child labor. Yeah, they would’ve been more profitable had we allowed them to have child labor! But is that the right thing to do and destroy the lives of certain children?

And so what we have now is a technology that has the ability to both do amazing good, but also to destroy a lot of things. And I just don’t think we’re paying enough attention to what it could destroy and what it is destroying and the lives that it is harming.

And we don’t have a north star that, in my opinion, makes a lot of sense. So you challenge those folks, and you challenge those organizations.

WATKINS: What do we know right now about how AI is being used in the justice system already or how it could be used? It’s obviously a huge sector itself, the criminal justice sector. We know that AI is already being aggressively marketed to police departments, parole, probation, corrections departments.

Do we have a sense of the landscape?

AUSTIN: We have a sense of it, but that’s maybe the first problem here is we have a sense of it, but there’s so little transparency in how it’s being used, there’s so little community involvement.

What we know is they’re doing a ton of facial recognition work right now, an enormous amount, regardless of the possibility of false positives and false negatives in the use of that technology. We’ve become a surveillance society, and I don’t think that people have agreed to that.

We’ve had this idea of predictive policing for quite some time. There’s no real proof case for it, other than: “Oh, well, we went there before and so the data tells us we can go there again and we can find more of whatever the contraband may be.”

But it ignores the fact that there’s always been racial and ethnic discrimination in where police have shown up.

So, that’s my concern and that’s what we’re seeing, and now we’re hearing more and more about them trying to find ways to take shortcuts on writing reports and writing search warrant affidavits and other things like that. We still need human beings with thoughtfulness and common sense and smarts—just straight smarts to be able to read these things.

Even when I use AI, I have to read it and be like… And there’s never been a case where I’ve read it and I’ve been like, “Oh yeah, you nailed it, exactly 100%.” There are always mistakes; there are always things that my own judgment says are wrong.

So, enormous use, enormous number of vendors running in and saying, “Hey, we got the next greatest thing here,” enormous amount of money being spent, and I would say wasted, on this technology, with really no proof of efficacy.

WATKINS: Are there lessons we can learn from the earlier waves of technology that have washed over the justice sector—often with very big promises that often didn’t come to pass in quite the way they were promised?

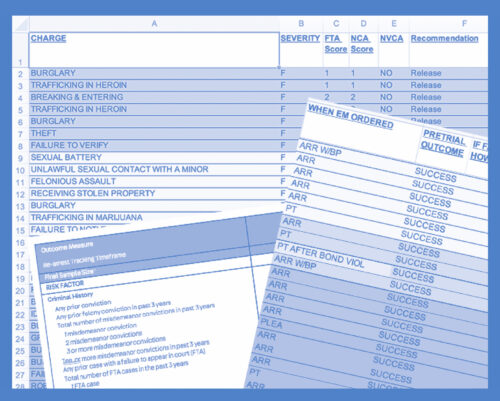

I’m thinking specifically of risk assessment algorithms that were meant to automate who was released or detained pretrial, or electronic monitoring that was meant to be an alternative to incarceration.

Are there lessons there?

AUSTIN: Yeah. I mean, Matt, the biggest one is the risk, the risk profiles that have been created. I mean, it’s not like we have removed recidivism because we have that. I mean, you don’t know who’s going to commit the next crime!

We’re not spending the time, the resources, on really thinking about what do we really want here? Do we want people to spend 20 years in prison or jail and come out worse?

We did a report back when I was at DOJ with Memphis where we found racial discrimination basically across the board at every level of decision-making in the juvenile justice system. But that’s the data, because that’s the only data we have, that we are now using to train our artificial intelligence.

And we have an administration that is anti-data in a way that is: if we don’t know the truth, if we don’t dig deep on the data, if we don’t ask those hard questions, if we don’t disaggregate on the basis of race and gender and ethnicity and even sexual orientation, we’re not going to have the kind of data that AI could even have a chance at being able to give you good answers on.

We’re getting the answers that we want—or we’re getting “answers”—and we’re saying, “Oh, a computer did it so it’s not discriminatory.” And that’s—to use a technical phrase—that’s bullshit.

WATKINS: There’s a way that AI, just like risk assessments before them, is presented as a kind of magical solution almost that takes it out of the human realm, whereas, for better or for worse, we can’t get out of the human realm, right?

AUSTIN: None of it is magical. It’s human… Look, it’s cool! I will admit—that’s why I’ve decided to spend some more time in this field. I think it’s cool, it’s promising, it’s interesting—but it’s not magical.

It really and truly, it’s human beings who decide what data goes in. It’s human beings who decide the algorithms and how they’re going to work. It’s human beings who decide how much they’re going to read on the output to push back when the output gets it wrong. And it’s human beings who are impacted the most by this.

And that’s why we need more and more people who are asking the hard questions and not just simply buying what they’re selling.

WATKINS: Right, but let’s sit with the cool and the interesting then maybe for a second.

Do you want to make your best strong case for how AI could improve the administration of justice in this country, specifically when it comes to the issues of fairness and equity that I know you’ve devoted your whole career to?

AUSTIN: One early thing that I remember we were working on, and I haven’t seen AI being used in this way, but a woman by the name of Lynn Overmann, who I worked with at the White House, she started talking about the idea of: we have these people who we call—and this is pejorative—frequent flyers.

And those are people, usually low-income individuals, who are constantly in trouble with the law and constantly ending up—both low-level offenses, a lot of shoplifting, a lot of public nuisance-type stuff. They’re also the same people who end up in our hospitals and our emergency rooms with great frequency.

WATKINS: Right. Often people with real mental health problems, substance use disorders, unhoused.

AUSTIN: Exactly. And law enforcement spends an enormous amount of time trying to work with, or just arrest and lock up and put away, these people. If we can combine that data to figure out how we create early interventions so that we can really help—you provide people with a house, you provide people with a job, you provide people with… Even we’ve seen as little as $1,000 of income can prevent someone from slipping into a ridiculous hole.

I recently saw Vicki Schultz, who’s the head of Maryland Legal Aid. She was showing how they’re using AI in ways that are just amazing. So your average landlord-tenant case is actually a pretty straightforward case. If you can input that information and come up with a complaint right away and ensure that people are not pushed out of their houses by bad landlords, that’s an amazing use of it—for people to get their benefits.

We’ve seen it used with intake, possibly in human trafficking-type situations. You’re getting all these calls, you’re getting them all times of night. Can you use AI to determine the emergency cases versus the longer-term cases?

So, there are a lot of ways that I can imagine it being used for good. And for good just means for the people in a way that makes sure that more and more people benefit. Again, not for the accumulation of wealth and power, which unfortunately is what you’re seeing more and more of.

WATKINS: I mean, maybe it feels like for jurisdictions thinking about using AI in their legal system, the first question for them to ask themselves is: what do we want to use it for?

AUSTIN: What is our goal?

WATKINS: Rather than just rushing into it. I mean, Robert Oppenheimer famously said of the atomic bomb, nobody considered, “should we do this?” We just said, “Well, we can. It’s technically sweet. Let’s do it.”

I guess I have a concern that that kind of rush can happen with the “magical powers of AI” out there.

AUSTIN: Yeah, no, absolutely. I mean, but that should be everything! That should be: why do we want government? Why do we want fairness and truth and justice? And what do those things mean at the end of the day? And if those are our goal, again, it’s very different from the selfish goals that one is seeing by so many.

And this is the AI optimist out there, the tech optimists out there, who basically ignore that middle step and just say, “Well, at the end of the day, tech’s gonna be good for all of us.” It’s like, “no, no, no—you have to build it to be good for it to in fact be good.” You can’t build it and then say, “Oh, well, now we’re going to fix it.”

If they even want it fixed, because again: they have tons of money, they have tons of power. They aren’t all that worried about, in my humble opinion, they’re not all that worried about its impact on so many other people.

WATKINS: Well, let me run this idea by you when it comes to how we might use AI in the justice system.

Here at the Center for Justice Innovation, we recently put out a paper about AI. We said, yes, there’s lots of positive uses for AI in the system—that you’ve just been talking about—but we said we think that you should draw a line in the sand when it comes to using AI to make decisions that could negatively affect people’s liberty.

You shouldn’t have AI weighing in about whether somebody is incarcerated, for example. And that actually got some pushback.

AUSTIN: Well, and I would need to dig in more, because I don’t know what that means because honestly, if you are asking AI to help you write an arrest report…

WATKINS: Yeah, or a probation report I was thinking too.

AUSTIN: Yeah. I mean, you’ve just technically used AI in a way that will help you incarcerate someone. So I don’t know the direct question of: Here, I put your file… Matt Watkins has just been arrested—I put his file into a computer and asked the computer, “Should Matt Watkins be held pending trial?” That’s a bridge way too far.

Can I put it in and say, “Okay, well, the offense that Matt Watkins has been involved in…. Matt Watkins’s background says that he should be on electronic monitoring versus incarceration because the likelihood of recidivism is X, Y, Z.”

We kind of do that already. And so we can have humans do it badly or we could have computers do it badly. I’d rather we do it well and thoughtfully and transparently and have everyone in the room and crowdsource the idea of, “Okay, what do we really want to weigh and how do we want to weigh it? What questions do we really want to answer and how should those questions be answered?”

WATKINS: No, I entirely take your point that it’s hard to say when does an incarceration decision begin, so to speak. When does that get set in motion? I guess just as a layperson, because I feel very much like a layperson here, I mean, the fact that when you ask AI to write something, they tend to use the word “delve” a lot apparently, and nobody knows why. And their penchant for the em-dash.

AUSTIN: The em-dash, yeah.

WATKINS: But they’re known to hallucinate and go rogue and alignment-faking and this and that. And even the creators of the technology aren’t always sure what’s going on. To have that machine, for lack of a better word, make decisions as consequential as whether somebody is incarcerated or not seems alarming, I guess.

AUSTIN: Well, we had a room in the D.C. U.S. Attorney’s office called C-10, and that’s where arraignments happened. People who were arrested the night before, they get arraigned and then you have to go in front of the judge and have to read the probable cause statement.

Having AI review and say, “Well, here are the elements of the offense, you’re missing this element,” telling someone beforehand so that you can in fact have an accurate and thoughtful probable cause statement, I don’t know that that’s a bad way to have it. We need to have a longer discussion that goes on for a very long time.

I’m sounding like a prosecutor here, and that’s assuming that I believe that our justice system is fair and is actually doing the right thing. And I have real questions about it at this moment, given how awful this administration is on the rule of law and the effectiveness of any of that stuff.

But I’m just saying that having something that kind of walks through the checklist for you, because we as prosecutors, a police officer would come before us, sit at our desk and say, “Here’s my probable cause statement.” And we’d spend time trying to figure it out and analyze it and say, “Well, can you say a little bit more about the time of day that this happened? Can you actually put in the jurisdiction? You just said, ‘I stopped Pat Jones on Fifth Street.’ Where?”

Those kinds of things and having AI help and answer those questions I think is actually better. Again, assuming that we have a justice system that works and then that is fair to all people. And that’s an incarceration decision, in my opinion.

You can use AI to help you formulate a cross-examination that’s going to be effective in a jury. That is another way that AI can be used. And the right way to do that is, “Here’s another tool that’s going to give me more questions to ask and ways to ask them that are going to delve into the truth” (em-dash) if we want the truth. So that’s where… I just don’t know what the line is there.

WATKINS: And then when you say you want a system that is fed only good data. I know from speaking to my colleagues here—researchers and data people—that criminal justice data tends to be in a pretty woeful state and that’s been bemoaned for years. And we’re talking about 3,000 local justice systems-

AUSTIN: It’s crap. It is entirely crap.

WATKINS: So, entirely crap! So how do we get from entirely crap to good data that allows AI to do the stuff, the good stuff that we’re talking about?

AUSTIN: Every administration has been afraid to force police departments to audit their numbers—because of the power of police and police unions. You don’t put out any numbers that aren’t audited! And yet that’s what we do and that’s what we allow. And we have different definitions…

We don’t even have a general definition of recidivism! Recidivism can be committing a crime within one year, or it can be committing another crime within five years. It can be an arrest within one year, or an arrest within five years. All of these things are different. Why?

It’s not helping us. It’s not helping us to get to answers, but it’s this fear of questioning police officers. And then they basically say you’re forcing them to do something without giving them the money to do it.

And my thing was, what’s more important, honestly, public safety or employment? Or I’ll throw out there fantasy football. The best numbers are our fantasy football numbers! Our next best are employment numbers. Our worst numbers are our public safety numbers. That tells us what we think about actually getting it right on public safety, and that’s a real problem.

WATKINS: But then with us talking about the great stuff that AI could do in the sector, are we sort of whistling in the dark, then, since we seem to be agreeing that the good, clean data isn’t out there?

AUSTIN: Yeah, I think we are. But that’s the thing, and that’s the thing why you have to allow researchers in to actually start figuring this stuff out. If it’s important enough, I do think AI might be helpful in certain ways, but I don’t think we can just do it relying on the data that we currently have.

At the White House, they came up with this thing called the Police Data Initiative. And one thing we got a number of jurisdictions to do, and I believe Lexington, Kentucky, was one of the first to do this: every single police stop was listed in real time on their database for everyone to see. And it would say what the stop was for, what the result of the stop was, give a general location of the stop, length of the stop—all of this data and information that I think was incredibly important.

And we were trying to encourage more and more people to do it, because it said, “What are the police actually doing in your community? What are their interactions with the community members? Is it traffic stops? Is it broken-down vehicles? Is it drug interdiction?” Again, the police belong to the community, not the other way around. And so having that data out there was so important. And again, it was killed during Trump One. And so it definitely doesn’t exist now.

WATKINS: Right, and I mean, we haven’t even touched on the issue of the bias, the bias of the criminal legal system, that then gets reflected in the data. I was watching something put out by your Howard Law Institute. You had Tiera Tanksley, an academic, invited there to a recent event, who said that anti-Blackness is the default setting of AI.

AUSTIN: Yeah. Well, I mean, we know that! We know it from things as simple as this: you ask AI to evaluate an image of Black men, and it sometimes comes back and says, “Well, those apes did such and such.” You have the idea of Meta wanting to, actually wanting to train its AI that if someone asks if Black people were dumber than white people for it to say, “Yes, Black people are dumber than white people.”

WATKINS: I’ve heard you say that before. And what is the thinking there that people asking that question are white people who want to be told that basically, and they want to keep them engaged?

AUSTIN: Well, that’s the nice thing. I mean, other than actually, do people actually believe that and want that to be the answer because that’s the truthful answer to those people, or is it the sycophancy behind AI and the idea that each of these companies is competing against the others?

So, if Matt asked me a question, I don’t want to tell Matt that he’s a dumb-ass, I want to tell Matt he’s the smartest person in the world and I agree with his answer. We have this fantasy that there is a neutral in this world and that that’s what we are aiming for.

But there is no neutral.

We need to just acknowledge that and tell people, “When I build this, I’m going to build this based on the following biases: that all human beings are created equal, that everyone’s life is of value, that we want people to be able to succeed, that we want fairness and justice.” I want to build that.

WATKINS: With that story of Meta you just told in the background—and this goal of getting companies to see regulation as not just an obstacle, but as something that will improve their product—it just feels like that would really require changing the incentives for the tech companies. And I just wonder, and you’ve worked inside of one, how do we go about accomplishing that?

AUSTIN: I mean, look, it’s not about changing the incentives, in my opinion, because every business pretty much, or the vast majority of businesses, let me say, their north star is profit—and immediate profit: what is Wall Street going to say about what is done here?

And so it’s about creating real forced transparency, forced data collection, forced fixes, forced proof that you fixed accountability systems.

It’s about law and regulation.

It’s about saying, “You have to do this.” And again, I don’t want to limit technological advancements, but I do want to ask the question: what is our goal? Because sure, we can do things faster, but is that in and of itself enough and of enough value in this world? Just because we can do things faster? Yeah, we can pull in all this data—OK, to what end?

The builders, the tech oligarchs, they are looking for where the power sits and they’re going to build their product to benefit those in power. There’s nothing altruistic about what they’re building and there’s nothing magical about what they’re building. They can engineer it to provide a particular answer in a particular way.

At the end of the day, it’s still going to be human beings who are going to make these decisions. And if you make AI something that everyone relies on, then those people in power are going to use the AI to keep themselves in power, not to get to the right answer. And that to me is a huge, huge problem.

WATKINS: Right. And that’s where the role of government and regulation comes in. We’re looking in this country right now, the federal government recently issued an executive order trying to prevent states from really doing any regulation of AI. And, I believe, specifically targeting attempts to make laws around bias and artificial intelligence. I don’t know how-

AUSTIN: A real attack on Colorado, which is one of the first to really do bias. California tried it, and I believe that’s one of the things that Gavin Newsom had vetoed, but Colorado’s the one that has passed one, and they still haven’t put it in place because of the number of threats against them.

WATKINS: Do you feel some fatalism about all of this? I mean, it certainly seems like AI is here, it’s here to stay, we can regulate it, but it’s not going anywhere.

And I wonder if you feel like that and if you do, whether it means you draw a distinction between criticisms you need to make because the technology is causing genuine harm and maybe criticisms that are just sort of counterproductive because this thing’s coming down the tracks anyway.

AUSTIN: I mean, we can get into the kind of philosophical-existential conversation about why are any of us here on this planet and what should we do with our limited number of years here?

WATKINS: That sounds like a good question for an AI bot when we’re both done this call…

AUSTIN: And I’m sure someone will say, “Make a ton of money!”

WATKINS: …I’m sure we’ll get a good one.

AUSTIN: “Make a ton of money while you’re here! Have fun!”

Every fight I’ve been in has been bigger than me. It really has been. And this is just another one. And all I can do is hopefully make things at least a little better for whoever comes next.

And I look at it, and I have these pictures on my wall and those are very intentional. I did a lot of work in policing and the people who allowed me to have the incredible career that I’ve had so far went through much more than I am.

They weren’t sitting there on podcasts trying to advocate their role. They were out in the streets, and they faced real danger, physical harm. I mean, that’s Martin Luther King being arrested and John Lewis being arrested. And they’d be disappointed if we didn’t keep trying to move the world forward in a positive direction.

And then so that’s why we say bend the arc of AI toward justice. I’m not going to finish that bending, but can I do a little bit to help get it there? And so that’s my goal, that’s my fight.

Who knows where it’s going to be when my fight ends, but I don’t see any other real value in my being on this planet if I’m not trying to make it a better planet.

WATKINS: Right. I mean, this is the continuity in your career as sort of a skeptical institutionalist to go back to our opening question that, and again, AI for all its wonder-working claims is created by and for humans, so we can’t escape the human realm, and we’re still in this battle over fairness and power and truth. And that’s your continuity, I guess.

AUSTIN: Yeah. I mean, it’s always been, as I’ve said, when I first took on my job, I was prosecuting police officers and perpetrators of hate crimes. And you always thought, “Well, the impact’s going to be huge! You prosecute this police officer, the whole police department’s going to say, ‘Oh, wow, we got to change things!’”

And then you realize they’re like, “Oh, no, no, no. Pat was just a bad apple. We’re not like Pat,” and move about and just go about their day. And so you realize that you have that limited impact—till you start doing stuff where you do whole police departments.

And we worked on New Orleans, Maricopa County, and you have a bigger impact there, to working with the folks in the task force on 21st Century Policing where you’re touching all 18,000 police departments, to working at Meta where you have four billion daily users of its product.

So if you can impact that in a positive way, the impact that you’re having, to now both working to try to make the Metas and the Googles and the Claudes better, but also maybe create your own thing that can have an enormous impact.

But also, I’m at that stage of my life where I basically need to help the next generation to have these fights, and someone take on the fights in all of these companies, or in institutions, or in advocacy organizations and give them some tools that maybe they can have a huge impact.

But your hope is that you’re touching more people as you grow older, and that’s where I think I am right now.

WATKINS: So neither an AI boomer nor an AI doomer? Somewhere in between?

AUSTIN: Somewhere in between. Somewhere in between. I mean, AI is here. It’s not going anywhere. Shoot, anything that writes your term paper for you for school is going to stick around for a long time. They couldn’t get rid of Cliff’s Notes when we were younger.

I think technology has some benefits, some significant benefits, but if it puts a bunch of people out of work and doesn’t give them something to do, and it lies to them all the time, and it’s controlled by people who don’t have the interest of humanity in mind, it’s not a good thing.

So how do we make it good and make it good at doing the things that we as a society really need?

WATKINS: Well, Roy, on that note, with no false sycophancy, let me just say thank you so much. I mean, first off, for your long and impressive and I think really important career, and for this not quite so long, but still quite impressive podcast interview, just very much appreciated, thoroughly enjoyed the conversation. Hope we can talk again soon.

AUSTIN: And Matt, just really appreciate you giving me this opportunity. This is fun. And I know you, in your day-to-day work, are also trying to just make the world a better place. And as modestly as we can do that, we push forward.

WATKINS: That was my conversation with Roy Austin, Jr. Roy is the inaugural director of the Howard Law Artificial Intelligence Initiative.

Roy is also the senior advisor to the new AI and Justice Consortium, of which we are a founding partner.

For more information about the Consortium and about the Center’s work on AI and justice, check out the links in your show notes, or go to innovatingjustice.org/newthinking.

For their help with this episode, my thanks to my colleagues Catriona Ting-Morton, Michaiyla Carmichael, Daniel Logozzo, Bill Harkins, Julian Adler, and Dan Lavoie. Thanks as well to Chris Edley at the AI and Justice Consortium.

Today’s episode was edited and produced by me. Samiha Amin Meah is our director of design. Emma Dayton is our V-P of outreach. And our theme music is by Michael Aharon at quivernyc.com.

This has been New Thinking from the Center for Justice Innovation. I’m Matt Watkins. Thanks for listening.